TL;DR:

- AI is now a competitive advantage in B2B research, improving both speed and lead qualification.

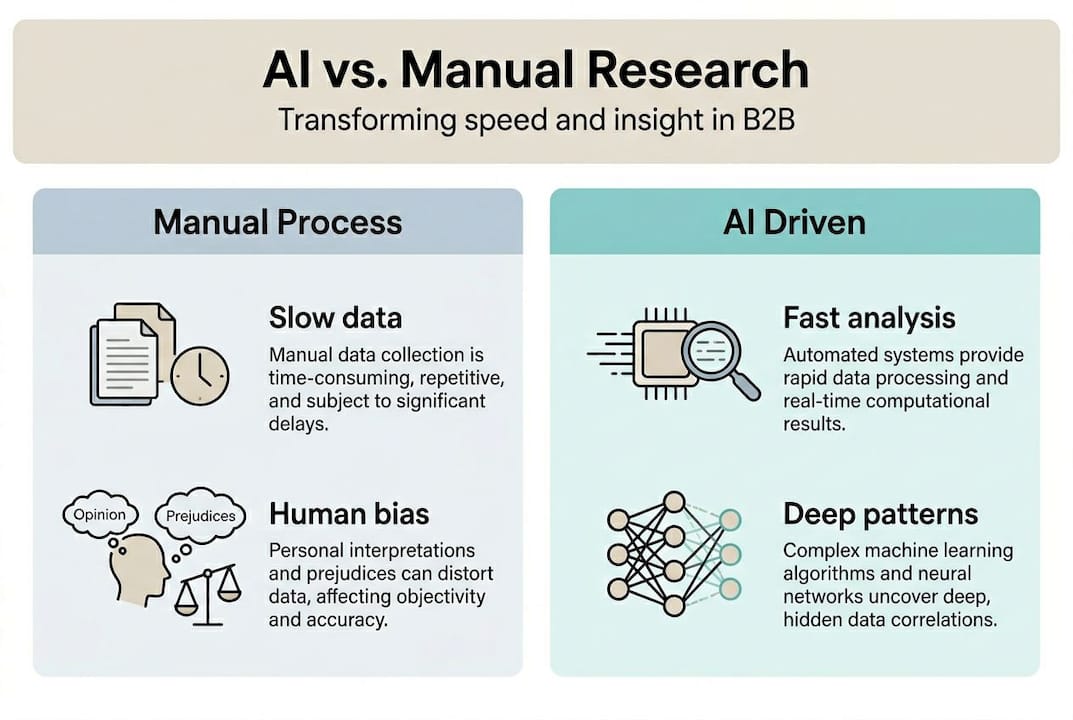

- Traditional methods face issues like slow processes, data silos, and complex stakeholder management.

- Successful AI adoption requires building data integration, human review processes, and a phased maturity model.

AI in B2B research is not a buzzword anymore. It is a measurable competitive advantage that mid-sized marketing and research teams are using right now to outpace slower rivals. AI enables rapid analysis of large datasets, transforms research workflows, and dramatically improves lead qualification. The gap between teams that have integrated AI into their research stack and those still relying on manual processes is widening fast. This article breaks down why traditional methods are failing, how AI directly fixes those failures, where human judgment still matters, and how to build a practical maturity model that delivers real ROI without blowing your budget.

Table of Contents

- Why traditional B2B research struggles today

- How AI changes the game for B2B research

- The human factor: When AI is not enough

- How to unlock AI value: A step-by-step maturity model

- The uncomfortable truth about AI for B2B research

- See how AI-powered research platforms accelerate B2B results

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI boosts research efficiency | AI solutions lead to faster, more accurate B2B market insights and more qualified leads. |
| Human oversight is essential | Expert input ensures AI-driven findings are relevant, accurate, and actionable for real business needs. |
| Integration drives ROI | Combining quality data, technology, and strategic collaboration delivers the highest return from AI investments. |
| Start small for success | Mid-sized firms get higher ROI by starting with focused use cases like lead scoring and expanding as they mature. |

Why traditional B2B research struggles today

To understand the benefit of AI, you need to see the limitations B2B teams currently face. The honest reality is that most conventional research processes were not built for the speed or complexity of modern markets. They were designed for a slower world, and they show it.

Manual data collection is time-consuming and introduces human bias at every stage. Analysts spend hours cleaning spreadsheets, reconciling mismatched data from different tools, and chasing down stakeholders for sign-off. By the time insights are ready, the market has already moved. Exploring key marketing research strategies reveals just how many teams are still stuck in these slow loops.

Siloed data is another major bottleneck. CRM data lives in one place, customer feedback in another, and competitive intelligence in a third. Stitching these sources together manually leads to incomplete pictures and delayed decisions. AI-native systems in agencies are already solving this by unifying data pipelines, but most mid-sized B2B teams have not caught up.
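To make the silo problem concrete, here is a minimal sketch of what unifying three sources can look like. The record layouts, the `account_id` join key, and the `unify` and `incomplete_accounts` helpers are all hypothetical, not taken from any specific tool.

```python
from collections import defaultdict

# Hypothetical exports from three separate tools, keyed by account ID.
crm = [
    {"account_id": "A1", "stage": "evaluation"},
    {"account_id": "A2", "stage": "prospect"},
]
feedback = [{"account_id": "A1", "nps": 9}]
competitive = [
    {"account_id": "A1", "incumbent": "VendorX"},
    {"account_id": "A3", "incumbent": "VendorY"},
]

def unify(**sources):
    """Merge records from each named source into one profile per account."""
    profiles = defaultdict(dict)
    for name, records in sources.items():
        for record in records:
            profile = profiles[record["account_id"]]
            # Copy every field except the join key into the shared profile.
            profile.update({k: v for k, v in record.items() if k != "account_id"})
            # Track which sources contributed, so gaps stay visible.
            profile.setdefault("sources", []).append(name)
    return dict(profiles)

def incomplete_accounts(profiles, expected_sources):
    """List accounts that are missing data from at least one source."""
    return sorted(
        account for account, profile in profiles.items()
        if len(profile["sources"]) < expected_sources
    )

profiles = unify(crm=crm, feedback=feedback, competitive=competitive)
gaps = incomplete_accounts(profiles, expected_sources=3)
```

Here `gaps` lists the accounts with an incomplete picture, which is often a more useful first output than the merged profiles themselves.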

Then there is the stakeholder problem. B2B buying decisions typically involve 6-10 stakeholders, which means research needs to address multiple perspectives, priorities, and objections simultaneously. Every additional stakeholder adds another set of questions to answer, and that complexity makes manual research far harder.

Here is what B2B leaders consistently report:

- Slow turnaround times that make insights feel outdated on arrival

- Inconsistent data quality across departments and tools

- Difficulty aligning research outputs with the needs of diverse buying committees

- Missed windows for competitive response due to lengthy analysis cycles

"76% of B2B leaders cite data quality and integration as their primary obstacles to effective research."

The result is a research function that is perpetually behind, reactive rather than proactive, and unable to support the speed of modern B2B decision-making. That is the environment AI is stepping into.

How AI changes the game for B2B research

With the pain points laid out, here is how AI directly addresses them. The shift is not incremental. It is structural.

AI processes large, complex datasets in minutes rather than weeks. It identifies patterns that human analysts would miss, not because analysts lack skill, but because the volume of data is simply too large for manual review. Predictive analytics takes this further by forecasting market shifts and surfacing buyer intent signals before a prospect even raises their hand. This is what makes AI-powered competitive intelligence so valuable for teams that need to act fast.

The performance numbers are striking. AI drives a 50-70% increase in qualified leads, cuts time-to-publish by 40%, and reduces sales cycles by 20-30%. These are not projections. They are outcomes being reported by B2B teams already using AI-enhanced services in their research workflows.

Here is a before-and-after comparison to make this concrete:

| Metric | Before AI | After AI adoption |
|---|---|---|
| Lead qualification rate | ~25% | 50-70% higher |
| Time-to-publish insights | 6-8 weeks | 40% faster |
| Manual rework hours | High | Reduced by 60% |
| Sales cycle length | 90+ days | 20-30% shorter |
| Research ROI | Baseline | 2x+ for optimized stacks |
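As a rough worked example, the percentages in the table can be applied to its own baselines. This sketch assumes that "40% faster" means 40% less elapsed time and treats 90 days as the floor of "90+ days". The `reduce_by` helper is hypothetical.

```python
def reduce_by(value, pct):
    """Return value after a pct percent reduction."""
    return value * (100 - pct) / 100

# Time-to-publish: 6-8 week baseline, cut by 40%.
publish_weeks = (reduce_by(6, 40), reduce_by(8, 40))

# Sales cycle: 90-day baseline, shortened by 30% (best case) or 20% (worst case).
sales_cycle_days = (reduce_by(90, 30), reduce_by(90, 20))

print(f"Time-to-publish: {publish_weeks[0]}-{publish_weeks[1]} weeks")
print(f"Sales cycle: {sales_cycle_days[0]:.0f}-{sales_cycle_days[1]:.0f} days")
```

Under those assumptions, a 6-8 week publishing cycle drops to roughly 3.6-4.8 weeks, and a 90-day sales cycle to roughly 63-72 days.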

For market intelligence examples that show these gains in practice, the pattern is consistent: teams that invest in AI for research see compounding returns as the technology learns from each cycle.

Pro Tip: If you are new to AI research tools, start with lead scoring and buyer intent detection. These two applications deliver the fastest and most measurable ROI for mid-sized firms, and they require the least organizational change to implement.
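For readers who want to see the mechanics, here is a minimal lead scoring sketch. It is a rule-based stand-in for what a trained model would learn from historical data. The signal names, weights, and threshold are hypothetical placeholders, not recommended values.

```python
# Hypothetical weights for buyer intent signals. In practice these would be
# fitted against your own closed-won data rather than set by hand.
WEIGHTS = {
    "visited_pricing_page": 30,
    "downloaded_whitepaper": 15,
    "requested_demo": 40,
    "opened_last_email": 5,
}

def score_lead(signals):
    """Sum the weights of the known signals observed for one lead."""
    return sum(WEIGHTS[name] for name in signals if name in WEIGHTS)

def qualify(leads, threshold=50):
    """Return lead names at or above the threshold, highest score first."""
    scored = [(score_lead(signals), name) for name, signals in leads.items()]
    return [name for score, name in sorted(scored, reverse=True) if score >= threshold]

leads = {
    "acme": {"visited_pricing_page", "requested_demo"},
    "globex": {"opened_last_email", "downloaded_whitepaper"},
    "initech": {"requested_demo", "downloaded_whitepaper"},
}
qualified = qualify(leads)
```

Starting with transparent rules like these makes it easier to check later whether a model-based scorer is actually outperforming the baseline.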

The human factor: When AI is not enough

Despite game-changing results, you still cannot go all in on AI without human judgment. This is where many teams make a costly mistake.

AI excels at execution: processing data, identifying patterns, generating first drafts of reports. But it struggles with nuanced strategy, creative thinking, and the kind of contextual judgment that comes from years of industry experience. Two-thirds of B2B marketers say AI is overhyped precisely because of these limitations, and 88% agree that AI output requires human correction before it is usable.

Here is a direct comparison of where each side excels:

| Capability | AI strengths | Human strengths |
|---|---|---|
| Speed | Processes data instantly | Slower but deliberate |
| Scale | Handles millions of data points | Limited by bandwidth |
| Pattern detection | Exceptional across large datasets | Strong for known patterns |
| Nuance and context | Weak, prone to hallucination | Strong, experience-driven |
| Creative strategy | Limited | Essential |
| Stakeholder empathy | None | Core competency |

Hallucination is a real risk. AI can confidently produce plausible-sounding but factually incorrect insights, especially when working with ambiguous or incomplete data. Without a human review layer, those errors can make it into reports and influence decisions. Teams that improve customer insights with AI consistently build in structured review checkpoints.

Here is the workflow that works:

- Use AI for the initial pass: data collection, pattern identification, and draft synthesis

- Insert human review for validation, context-checking, and strategic interpretation

- Iterate insights into action by combining AI speed with human judgment on final recommendations
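The three steps above can be sketched as a simple review gate. This is one possible shape rather than a prescribed implementation: the `Insight` fields, the confidence score, and the 0.8 threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Insight:
    claim: str
    confidence: float  # hypothetical model-reported score, 0.0-1.0
    sources: list = field(default_factory=list)
    status: str = "draft"

def route(insight, min_confidence=0.8):
    """Send every AI draft to human review, flagging weak ones as priority."""
    needs_attention = insight.confidence < min_confidence or not insight.sources
    insight.status = "priority_review" if needs_attention else "standard_review"
    return insight

def approve(insight, reviewer, accepted):
    """Record the human decision; nothing is approved without a named reviewer."""
    insight.status = f"approved_by:{reviewer}" if accepted else "rejected"
    return insight

# Step 1: AI produces drafts (hard-coded here as stand-ins).
drafts = [
    Insight("Segment X churn is rising", 0.92, ["crm_export"]),
    Insight("Competitor Y is exiting the market", 0.55, []),
]

# Step 2: every draft is routed to a reviewer; none skips the gate.
routed = [route(d) for d in drafts]

# Step 3: a human accepts or rejects before anything reaches a report.
final = approve(routed[0], reviewer="analyst_a", accepted=True)
```

The design choice that matters is that no path leads from "draft" to "approved" without a human decision. The confidence score only changes review priority, never whether review happens.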

Expert interviews and proprietary research remain irreplaceable for competitive differentiation. AI can analyze what is already public. It cannot replicate the insight you get from a 45-minute conversation with a key buyer.

How to unlock AI value: A step-by-step maturity model

It is not just about technology. Here is how teams can elevate their AI research maturity in a way that builds sustainable capability rather than chasing the next shiny tool.

The maturity model moves through three stages:

- Experimental: Run one or two AI pilots in low-risk areas like lead scoring or buyer intent analysis. Measure results carefully. Build internal confidence before expanding.

- Pilot: Expand to analytics and generative tasks such as automated reporting, survey synthesis, or competitive monitoring. Integrate AI outputs into existing workflows rather than creating parallel processes.

- Integrated AI stack: AI becomes part of the standard research operating model. Data flows automatically between tools, human review is structured and efficient, and insights reach decision-makers faster than ever before.

Data quality and IT partnerships are not optional at any stage. 76% of B2B teams report data quality and integration as their top challenge, and teams that skip this foundation consistently underperform. Budget discipline also matters more than most teams expect. Mid-sized firms achieve 2.1x ROI with budgets under €15K by focusing on essentials rather than overbuilding.

Pro Tip: Evaluate your AI research maturity every quarter using three core metrics: qualified lead volume, research cycle time, and rework hours. These three numbers tell you whether your investment is compounding or stalling.
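A quarterly check on those three metrics can be as simple as the sketch below. The metric names, the sample figures, and the rule for "compounding" (leads up, cycle time and rework down) are illustrative assumptions.

```python
def quarter_over_quarter(previous, current):
    """Percent change per metric between two quarters, rounded to 0.1."""
    return {
        metric: round((current[metric] - previous[metric]) / previous[metric] * 100, 1)
        for metric in previous
    }

def is_compounding(changes):
    """Qualified leads should rise while cycle time and rework hours fall."""
    return (
        changes["qualified_leads"] > 0
        and changes["cycle_time_days"] < 0
        and changes["rework_hours"] < 0
    )

# Made-up figures for two consecutive quarters.
q1 = {"qualified_leads": 120, "cycle_time_days": 40, "rework_hours": 200}
q2 = {"qualified_leads": 150, "cycle_time_days": 32, "rework_hours": 150}

changes = quarter_over_quarter(q1, q2)
verdict = "compounding" if is_compounding(changes) else "stalling"
```

With these sample numbers, leads rise 25% while cycle time falls 20% and rework hours fall 25%, so the quarter reads as compounding.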

For teams building toward scalable market intelligence, the maturity model is the fastest path from experimentation to reliable, high-ROI research operations. Tools like AgencyFlo and purpose-built AI research platforms can accelerate each stage significantly.

The uncomfortable truth about AI for B2B research

With this framework in mind, consider what really drives sustainable results. Most mid-sized B2B teams focus too much on finding the right AI tool and too little on the conditions that make any tool work.

The biggest gains do not come from buying more software. They come from improving data integration across departments, building genuine IT partnerships, and establishing disciplined human review processes. AI is an efficiency multiplier. It amplifies what is already there. If your data is fragmented and your review process is inconsistent, AI will produce faster versions of the same flawed insights.

Data quality and integration are the number one challenge for 76% of B2B teams, yet most AI adoption conversations skip straight to tool selection. That is backwards. The teams seeing the strongest returns are not necessarily using the most advanced AI. They are the ones who have done the unglamorous work of cleaning their data, aligning their teams, and building structured review cycles.

Future-ready research teams invest equally in technology and human expertise. They treat AI as a capable junior analyst, not an oracle. They use it to improve AI-driven research output through iteration, not by accepting first drafts as final. Sustainable success in B2B research is not an AI problem. It is an integration problem. Solve that first, and the AI will do its job.

See how AI-powered research platforms accelerate B2B results

Ready to put these insights to work? Here is where to start.

Gather is built specifically for marketing and research teams that need to move fast without sacrificing rigor. The platform automates study design, runs AI-moderated interviews, and delivers board-ready insights in days rather than months. It integrates directly with your CRM and existing data sources, so you are not starting from scratch.

Explore the full range of B2B AI research use cases to see how teams like yours are using Gather to cut research timelines and improve insight quality. You can also review the customer research crisis study for original 2026 data on how B2B teams are adapting. When you are ready to move from theory to practice, the AI-native research platform is the place to start.

Frequently asked questions

What AI capabilities are most useful for B2B research?

Predictive analytics, lead scoring, and buyer intent detection help B2B teams identify the right opportunities faster and prioritize outreach with greater precision.

How much human input does AI really need for B2B research?

AI can handle the initial 80% of research effort, but 88% of B2B marketers agree that its output requires human correction before it is ready for strategic use, making expert review a non-negotiable step.

Why do some B2B teams see higher AI ROI with smaller budgets?

Tighter budgets force focus on high-impact essentials. Budgets under €15K consistently deliver 2.1x ROI for mid-sized companies because they avoid overbuilding and prioritize the applications with the fastest returns.

What is the biggest risk of using AI for B2B research?

Poor data quality and lack of integration are the most common risks. 76% of B2B teams cite these as their top challenges, and they directly undermine the accuracy of AI-generated insights.