TL;DR:

- Effective AI-driven competitive intelligence relies on deliberate process integration and clear research questions.

- Multi-LLM workflows improve accuracy by comparing outputs and flagging divergence for human validation.

- Customization, proprietary data, and workflow discipline are essential for gaining true strategic advantage with AI.

Most marketing and business teams assume that plugging an AI tool into their research stack will instantly surface sharp, accurate competitive intelligence. It rarely works that way. The real advantage comes not from the tool itself but from how deliberately you integrate AI into your workflows. Reported results include 92% data precision and 73% lower engineering cost per story point than manual workflows, but gains like these appear only when the process is designed with intention. This guide covers the fundamentals, advanced frameworks, platform comparisons, and expert pitfalls so your team can move from confusion to confident, rapid market insight.

Table of Contents

- What is AI-driven competitive intelligence?

- Frameworks and workflows: How AI delivers faster insight

- AI tool effectiveness and platform comparison for marketers

- Common pitfalls and expert strategies for using AI in CI

- Why most AI competitive intelligence outcomes miss the mark

- Discover how Gather supercharges your AI-driven research

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI increases speed and precision | AI can deliver actionable competitive intelligence in hours, not weeks, with 92% data precision. |
| Multi-LLM validation prevents errors | Combining outputs from several AI models helps avoid errors and boosts confidence in insights. |
| Platform selection is crucial | Choose AI CI tools for unique data, privacy safeguards, and workflow depth to avoid commodity outcomes. |
| Customize your AI workflows | Teams get the best results when they tailor AI processes and validate with domain expertise. |

What is AI-driven competitive intelligence?

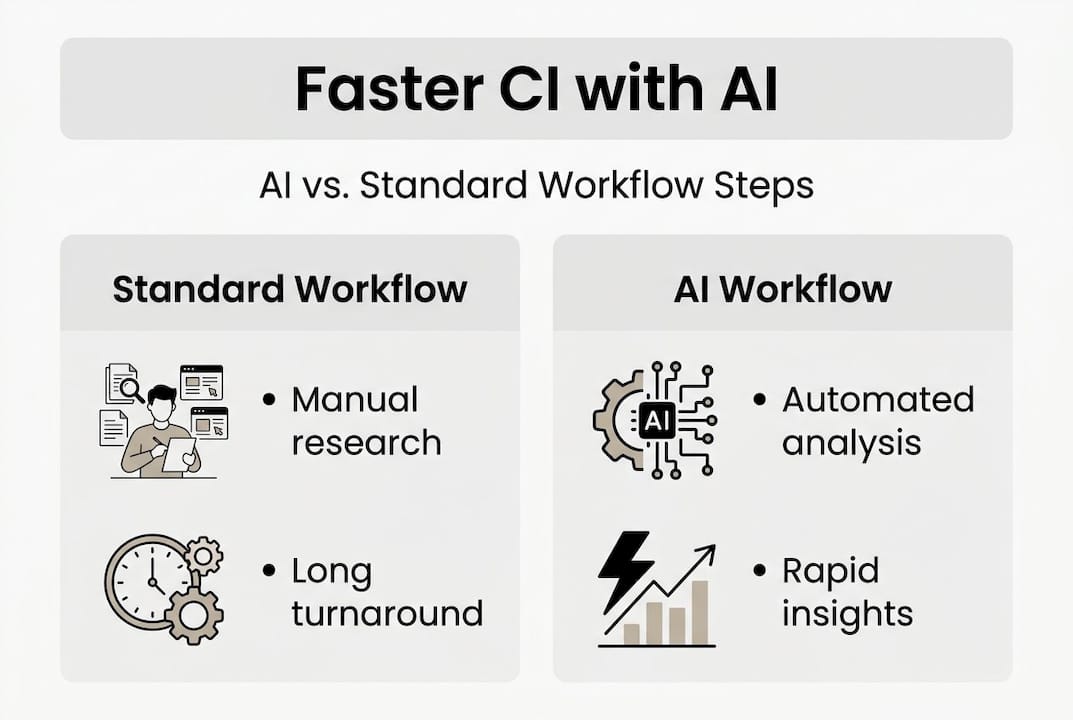

Competitive intelligence has always been about knowing what your rivals are doing before it affects your market position. Traditionally, that meant hours of manual research, analyst reports, and slow synthesis cycles. AI changes the equation entirely, but not in the way most people expect.

AI-driven competitive intelligence uses machine learning, natural language processing, and large language models to automate the collection, analysis, and synthesis of competitor data at a scale no human team can match. It is not a replacement for human judgment. It is an amplifier.

Here is what modern AI-driven CI actually does well:

- Trend detection: Scanning thousands of sources simultaneously to flag emerging market shifts before they become obvious

- Sentiment analysis: Measuring how customers, analysts, and media perceive your competitors in near real time

- Consensus mapping: Identifying where multiple data sources agree or diverge on a competitor's positioning

- Gap identification: Surfacing unmet needs or underserved segments your competitors are missing

- Speed and coverage: Processing in hours the data volumes that would take a human team weeks

The cost and efficiency gains are real. Companies using agentic AI systems have reduced manual engineering labor costs by 73%. That is not a marginal improvement. That is a structural shift in how intelligence teams operate.

For market intelligence examples that show this in practice, the pattern is consistent: teams that define clear research questions before deploying AI get dramatically better outputs than those who treat AI as a search engine.

The deeper opportunity is scalable intelligence with AI, where your research capacity grows without proportional headcount increases. That is the real competitive advantage.

"The difference between signal and noise in AI-driven CI is almost entirely a function of how well you define your inputs, not how powerful your model is."

Pro Tip: Before running any AI-driven CI workflow, write down the specific business decision the intelligence needs to support. Vague questions produce vague outputs, regardless of how sophisticated your tools are.

Frameworks and workflows: How AI delivers faster insight

Understanding what AI can do is one thing. Building a repeatable workflow that consistently delivers high-quality competitive intelligence is another. The teams getting the best results are not using a single AI tool. They are orchestrating multiple models in sequence.

The most effective approach right now is multi-LLM prompt orchestration. Multi-LLM approaches use ChatGPT, Claude, Gemini, and Perplexity for consensus mapping, with confidence ratings assigned based on how many models agree. When three or four models converge on the same finding, you have high confidence. Two models agreeing is medium confidence. Divergent outputs flag areas that need human review.
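The confidence rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a production method: the function name `rate_confidence` is hypothetical, and it assumes each model's output has already been reduced to a short finding that can be compared as normalized text. Real model outputs would need a more careful comparison step.

```python
from collections import Counter


def rate_confidence(findings_by_model: dict[str, str]) -> tuple[str, str]:
    """Return the most common finding and a confidence label.

    Assumption: findings are short strings that match after trimming
    whitespace and lowercasing. Comparing free-form model output would
    need something more robust than exact matching.
    """
    if not findings_by_model:
        raise ValueError("at least one model output is required")

    # Count how many models produced each normalized finding.
    counts = Counter(text.strip().lower() for text in findings_by_model.values())
    finding, votes = counts.most_common(1)[0]

    if votes >= 3:
        label = "high"                # three or four models converge
    elif votes == 2:
        label = "medium"              # two models agree
    else:
        label = "needs human review"  # every model diverges
    return finding, label
```

With four models where three return the same finding, the function returns that finding with a "high" label. If no two models agree, the label signals that an analyst should step in.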

Here is how a structured multi-LLM CI workflow looks in practice:

- Input definition: Frame a precise competitive question tied to a real business decision

- Parallel querying: Send the same prompt to multiple LLMs simultaneously

- Aggregation: Collect and compare outputs, noting where models agree and where they diverge

- Validation: Cross-check high-confidence findings against primary sources or proprietary data

- Reporting: Synthesize validated insights into a structured format your stakeholders can act on
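The parallel querying and aggregation steps above might look something like the following sketch. Everything here is an assumption made for illustration: the three `model_*` entries are stand-in functions rather than real API clients, and the helper names (`query_in_parallel`, `aggregate`, `divergent_findings`) are hypothetical. In practice each stand-in would be replaced with a call to the vendor's own SDK.

```python
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# A model is represented as any function that takes a prompt and returns text.
ModelFn = Callable[[str], str]


def query_in_parallel(prompt: str, models: dict[str, ModelFn]) -> dict[str, str]:
    """Send the same prompt to every model concurrently."""
    with ThreadPoolExecutor(max_workers=len(models)) as pool:
        futures = {name: pool.submit(fn, prompt) for name, fn in models.items()}
        return {name: future.result() for name, future in futures.items()}


def aggregate(answers: dict[str, str]) -> dict[str, list[str]]:
    """Group model names by normalized answer so agreement is visible."""
    groups: dict[str, list[str]] = defaultdict(list)
    for name, text in answers.items():
        groups[text.strip().lower()].append(name)
    return dict(groups)


def divergent_findings(groups: dict[str, list[str]]) -> list[str]:
    """Return findings backed by only one model, which go to human review."""
    return [finding for finding, names in groups.items() if len(names) == 1]


# Hypothetical stand-ins for real model clients.
models: dict[str, ModelFn] = {
    "model_a": lambda prompt: "Competitor X cut prices",
    "model_b": lambda prompt: "competitor x cut prices",
    "model_c": lambda prompt: "Competitor X launched a new tier",
}

answers = query_in_parallel("What changed in Competitor X's pricing this quarter?", models)
groups = aggregate(answers)
review_queue = divergent_findings(groups)
```

Here `groups` shows two models backing the price-cut finding, while `review_queue` holds the single-model claim about a new tier. That claim is the one to check against primary sources or proprietary data before it reaches a report.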

| Dimension | Standard workflow | Multi-LLM workflow |
|---|---|---|
| Research speed | Days to weeks | Hours |
| Coverage | Limited by analyst bandwidth | Broad, automated |
| Confidence scoring | Subjective | Consensus-based |
| Error detection | Manual review | Divergence flagging |
| Scalability | Low | High |

This workflow directly supports AI-driven customer insights by ensuring that findings are not just fast but also defensible. Speed without accuracy is worse than no intelligence at all because it creates false confidence.

For teams focused on driving better decisions, the consensus model is particularly powerful. It forces you to treat AI outputs as hypotheses to be validated, not conclusions to be acted on immediately.

Pro Tip: Run the same CI prompt across at least three LLMs before drawing conclusions. Divergent outputs are not failures. They are the most valuable part of the process because they tell you exactly where human judgment is needed.

The goal of insight delivery with AI is not to eliminate analysts. It is to focus their expertise on the 20% of findings that require it, while AI handles the other 80%.

AI tool effectiveness and platform comparison for marketers

Not every AI platform delivers the same quality of competitive intelligence. The market is crowded, and the differences between tools matter enormously when you are making strategic decisions based on their outputs.

What separates genuinely useful CI tools from expensive noise generators is platform depth, privacy safeguards, and unique data access.

Here is a practical comparison of what to look for:

| Feature | Basic AI tools | Advanced CI platforms |
|---|---|---|
| Data precision | Variable | Up to 92% |
| Insight turnaround | 48 to 72 hours | Under 24 hours |
| Differentiator detection | 1x baseline | Up to 3.4x faster |
| Privacy controls | Minimal | Enterprise-grade |
| Unique data access | Public sources only | Proprietary integrations |
| Confidence scoring | None | Built-in |

When evaluating platforms for your team, ask these questions before committing:

- Does the platform access data sources your competitors cannot easily replicate?

- What privacy and data security certifications does it hold?

- Can it integrate with your existing CRM, POS, or customer data systems?

- Does it provide confidence scores or validation mechanisms for its outputs?

- How does it handle domain-specific terminology in your industry?

The 92% data precision and 24-hour turnaround benchmarks are achievable, but only with platforms built specifically for CI depth, not general-purpose AI assistants repurposed for research.

For teams building research strategies for advantage, platform selection is a strategic decision, not a procurement one. The wrong tool does not just waste budget. It produces misleading intelligence that can actively harm your positioning decisions.

If you want to see how organizations are navigating this in practice, the customer research crisis study offers a grounded look at what happens when research infrastructure fails to keep pace with business needs.

Common pitfalls and expert strategies for using AI in CI

Even well-designed AI workflows fail when teams underestimate the genuine risks. The pitfalls are not theoretical. They show up in real decisions made on flawed intelligence.

Hallucinations, bias, data privacy risks, and limited competitive differentiation are the leading AI pitfalls for CI teams. Each one deserves a specific mitigation strategy.

"Generative AI is trained on public data. If your competitors are using the same tools on the same data, your insights are not a competitive advantage. They are table stakes."

Here is how expert CI teams reduce these risks:

- Validate before acting: Never base a strategic decision on a single AI output. Cross-check findings against at least two independent sources, including primary research where possible.

- Audit for bias regularly: AI models reflect the biases in their training data. Periodically test your CI prompts against known outcomes to detect systematic skew.

- Protect proprietary inputs: Be deliberate about what data you feed into AI tools. Inputting sensitive customer or competitive data into public AI platforms creates real privacy exposure.

- Combine AI with primary research: AI excels at synthesizing public information. For insights that require depth, pair it with direct customer interviews or expert panels.
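One way to make the bias audit concrete is to replay past CI prompts whose real-world outcome is now known and measure how far the AI's calls lean in one direction. The sketch below is an assumption about how such a check could be structured, not an established method: the `audit_skew` name, the "growth" and "decline" labels, and the sample records are all invented for illustration.

```python
def audit_skew(records: list[tuple[str, str]], positive: str = "growth") -> dict[str, float]:
    """Compare AI calls with known outcomes.

    Each record is (ai_prediction, actual_outcome). The labels are
    illustrative; use whatever categories your CI prompts produce.
    """
    if not records:
        raise ValueError("at least one record is required")

    total = len(records)
    # Share of cases where the AI's call matched what actually happened.
    accuracy = sum(predicted == actual for predicted, actual in records) / total
    # How often the AI predicted the positive label vs. how often it occurred.
    predicted_rate = sum(predicted == positive for predicted, _ in records) / total
    actual_rate = sum(actual == positive for _, actual in records) / total

    # A skew above zero means the AI calls the positive label more often
    # than reality supports; below zero means the opposite.
    return {"accuracy": accuracy, "skew": predicted_rate - actual_rate}


# Invented example records: (AI prediction, what actually happened).
history = [
    ("growth", "growth"),
    ("growth", "decline"),
    ("growth", "decline"),
    ("decline", "decline"),
]
report = audit_skew(history)
```

In this invented history the AI predicts growth three times out of four while growth occurred only once, so `report["skew"]` is 0.5 and `report["accuracy"]` is 0.5. A persistent skew like that suggests the prompt or the model is systematically optimistic about competitors.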

The marketing research process steps that work best treat AI as one layer in a multi-source intelligence stack, not the entire stack.

When selecting research methodologies, the question is not "should we use AI?" but "where does AI add the most value in this specific workflow?"

Pro Tip: Build your CI advantage on data sources your competitors cannot access, not on AI tools they can buy too. Proprietary customer data, integrated CRM signals, and direct interview insights are where durable competitive edges live.

Why most AI competitive intelligence outcomes miss the mark

Here is the uncomfortable reality most vendors will not tell you: the majority of AI-driven CI programs produce commodity insights. They surface the same trends, flag the same competitor moves, and generate the same strategic recommendations because they are all drawing from the same public data pools with the same off-the-shelf tools.

The organizations that genuinely win with AI in CI share one trait. They treat the workflow, the validation process, and the data sources as the competitive asset, not the AI model itself. The model is just the engine. The route you design determines where you end up.

What most teams miss is the need for customization at every layer: the prompts, the validation logic, the data inputs, and the synthesis format. Generic prompts produce generic outputs. Thoughtfully designed workflows produce insights that actually move strategy.

The path to improving insights with AI runs through process discipline, not platform upgrades. The teams getting the most value are the ones who have invested in designing their workflows as carefully as they would any other strategic capability.

Discover how Gather supercharges your AI-driven research

If these frameworks resonate but building them from scratch feels daunting, Gather is designed to close that gap. Gather is an AI-native research platform built specifically for marketing and business teams that need fast, reliable, and defensible competitive intelligence without the overhead of agency timelines or fragile manual workflows.

From automated study design to AI-moderated interviews and real-time structured analysis, Gather handles the full research lifecycle. Explore the Gather platform to see how it integrates with your existing data sources, or browse Gather use cases to find the CI workflows most relevant to your team. For a grounded look at what's at stake when research infrastructure lags, the customer research crisis study is worth your time.

Frequently asked questions

What types of competitive insights can AI deliver?

AI can surface trends, detect competitor moves, provide sentiment analysis, and identify market gaps with high speed and precision. When workflows are properly designed, AI systems can reach up to 92% precision and deliver competitive insights in under 24 hours.

What are the main risks of using AI for competitive intelligence?

Key risks include hallucinated data, privacy exposure, bias inherited from the underlying models, and limited competitive differentiation when rivals run the same tools on the same public data. Depth limitations tied to model training are a further constraint.

How can teams validate AI-generated competitive intelligence?

Teams should triangulate findings across multiple AI models and cross-check with trusted sources before acting. Multi-LLM approaches build consensus and help identify outlier or divergent results that require human review.

Is AI competitive intelligence suitable for all industries?

Most industries benefit, but fields with sensitive data, unique jargon, or low digital footprints may need careful customization. AI's utility depends on data availability, privacy needs, and domain-specific expertise to deliver reliable outputs.