TL;DR:

- AI enables large-scale qualitative analysis in days, drastically reducing traditional time and cost barriers.

- It excels at structured tasks like sentiment scoring and theme extraction but struggles with nuance and context.

- Combining AI with human judgment creates a hybrid process that maximizes speed, reliability, and insight depth.

Qualitative research has long carried a reputation for being slow, expensive, and impossible to scale. But that assumption is being shattered. Anthropic recently completed 81,000 interviews across 159 countries in a single week, combining the depth of qualitative inquiry with a scale that traditional methods could never touch. For marketing and research professionals at mid-sized companies, this signals something important: the rules of qualitative research have fundamentally changed. This article breaks down exactly what AI can and cannot do in qualitative research, and gives you a practical framework for using it effectively.

Table of Contents

- The case for AI in qualitative research

- What AI does best: Accuracy, reliability, and speed

- What AI gets wrong: Nuance, risk, and context challenges

- Best practices: Integrating AI into your qualitative research process

- An expert perspective: Where AI truly changes the qualitative game

- Unlock faster, smarter research with Gather

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI supercharges scale | Modern AI enables the rapid analysis of massive qualitative datasets, delivering insights much faster than traditional methods. |
| Consistency and speed | AI systems excel in consistent coding and sentiment analysis, often outperforming human reliability benchmarks. |
| Human input remains vital | Critical weaknesses in nuance and context mean human oversight is essential for trustworthy qualitative research. |
| Hybrid approach wins | Combining AI's speed with human interpretation produces the best research outcomes for marketers and strategists. |

The case for AI in qualitative research

Traditional qualitative research has always been a trade-off. You get rich, nuanced insights, but you pay for them in time, money, and scope. A typical focus group or interview study might take six to twelve weeks from design to final report. Sample sizes stay small because every transcript requires hours of manual coding. For mid-sized companies without the budget of a Fortune 500 firm, this creates a painful gap: you need strategic insights, but the process to get them is too slow and resource-heavy to keep pace with real business decisions.

Those pain points are real and familiar:

- Slow timelines: Traditional studies rarely deliver results in under four to six weeks

- High cost per insight: Manual coding and analysis inflate project budgets fast

- Small sample sizes: Human bandwidth limits how many interviews can realistically be analyzed

- Difficult to scale globally: Coordinating multilingual research across markets adds complexity and cost

- Agency dependency: Many teams outsource research entirely, losing speed and control

AI addresses each of these directly. The 81,000-interview study mentioned above is not a stunt. It is a proof of concept that large-scale qualitative analysis, once thought impossible, is now achievable in days. AI can extract themes, identify sentiment patterns, and surface recurring signals across thousands of open-ended responses faster than any human team.

Stat to know: Anthropic's study completed 81,000 interviews across 159 countries in a single week, a volume that would take human researchers months to process manually.

For marketing teams, this unlocks real use cases: rapid theme extraction from customer feedback, fast synthesis of global audience data, and quick iteration on messaging concepts. Exploring competitive marketing research strategies becomes far more practical when your research cycle shrinks from months to days.

Pro Tip: AI does not replace the human researcher. It removes the bottleneck of manual processing so your team can focus on what humans do best: interpreting meaning, applying judgment, and connecting insights to strategy.

What AI does best: Accuracy, reliability, and speed

Once you understand why AI is appealing, the next question is: where does it actually outperform traditional methods? The answer is more concrete than most people expect.

A study published in Nature found that LLMs like GPT-4 outperform humans in inter-rater reliability for sentiment analysis, political leaning classification, and emotional intensity scoring, measured using Krippendorff's alpha. They also showed stronger temporal consistency, meaning they code the same data the same way across time, something human coders struggle with due to fatigue and drift.
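To make the reliability metric less abstract, here is a minimal Python sketch of Krippendorff's alpha for nominal labels such as positive, neutral, and negative. The function name and the sample ratings are illustrative assumptions, not taken from the study, and a vetted statistics package is the safer choice for production work.

```python
from collections import Counter
from itertools import permutations


def krippendorff_alpha_nominal(units):
    """Krippendorff's alpha for nominal data.

    units: list of lists; each inner list holds the labels that the
    coders (human or AI) assigned to one response. Coders who skipped
    a response are simply left out of that inner list.
    """
    # Coincidence matrix: every ordered pair of labels within a unit,
    # weighted by 1 / (number of labels in that unit - 1).
    coincidences = Counter()
    for labels in units:
        m = len(labels)
        if m < 2:
            continue  # a single label cannot be compared with anything
        for a, b in permutations(labels, 2):
            coincidences[(a, b)] += 1 / (m - 1)

    totals = Counter()
    for (a, _), weight in coincidences.items():
        totals[a] += weight
    n = sum(totals.values())
    if n < 2:
        raise ValueError("need at least one response with two or more labels")

    # Observed disagreement versus disagreement expected by chance.
    observed = sum(w for (a, b), w in coincidences.items() if a != b)
    expected = sum(
        totals[a] * totals[b] for a in totals for b in totals if a != b
    ) / (n - 1)
    if expected == 0:
        return 1.0  # only one label was ever used, so nothing can disagree
    return 1 - observed / expected


# Hypothetical example: two coders labeling four responses.
ratings = [
    ["positive", "positive"],
    ["negative", "negative"],
    ["positive", "negative"],
    ["neutral", "neutral"],
]
print(round(krippendorff_alpha_nominal(ratings), 3))
```

An alpha of 1.0 means the coders agreed on every response, while values near 0 mean agreement no better than chance.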

Here is a practical comparison of how AI and human researchers stack up on common qualitative tasks:

| Task | AI reliability | Human reliability | Winner |
|---|---|---|---|
| Sentiment scoring | High | Moderate | AI |
| Theme/category coding | High | Moderate to high | AI (at scale) |
| Emotional intensity | High | Variable | AI |
| Sarcasm detection | Low | Low to moderate | Human |
| Contextual nuance | Low | High | Human |
| Minority view capture | Low | High | Human |

The pattern is clear. AI excels at structured, repeatable tasks where consistency matters. Humans excel where judgment, context, and emotional intelligence are required.

The most effective workflows use AI for the heavy lifting first, then bring humans in for refinement. Here is a practical numbered approach:

1. Define your coding framework before running AI analysis

2. Run AI on the full dataset to generate initial themes and sentiment scores

3. Review a stratified sample of AI-coded responses manually

4. Flag edge cases and anomalies for deeper human review

5. Validate final themes against your original research questions

This approach, which supports improving customer insights with AI, gives you the speed of automation without sacrificing the quality that decision-makers expect. It also makes scalable market intelligence genuinely achievable for teams without large research departments.
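The manual review step in the workflow above depends on drawing a stratified sample, so that every AI-assigned theme gets checked and not just the most common one. Here is a rough Python sketch of how that could look. The record fields (`id`, `theme`) and the function name are hypothetical, chosen only for illustration.

```python
import random
from collections import defaultdict


def stratified_sample(records, key, per_stratum, seed=0):
    """Pick up to `per_stratum` records from each group defined by `key`.

    records: list of dicts, e.g. AI-coded responses.
    key: the field to stratify on, such as the AI-assigned theme.
    seed: fixed so the same sample can be reproduced for an audit.
    """
    rng = random.Random(seed)
    groups = defaultdict(list)
    for record in records:
        groups[record[key]].append(record)

    sample = []
    for label in sorted(groups):
        items = groups[label]
        # Small strata are taken whole rather than raising an error.
        sample.extend(rng.sample(items, min(per_stratum, len(items))))
    return sample


# Hypothetical AI-coded responses: 20 tagged "price", 10 tagged "support".
coded = [
    {"id": i, "theme": "price" if i % 3 else "support"} for i in range(30)
]
for row in stratified_sample(coded, key="theme", per_stratum=3):
    print(row["id"], row["theme"])
```

Sampling a fixed number per theme deliberately over-represents rare themes, which is usually what you want when the goal is to catch miscoded edge cases.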

Pro Tip: Always test your AI tool's reliability on a small labeled dataset before running it on your full study. A quick calibration check can catch systematic errors before they contaminate your entire analysis.
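A calibration check like this can be as simple as comparing AI labels against a small set of human-labeled responses and counting where they diverge. The sketch below is one way to do that in Python. The function name and sample labels are assumptions made for illustration.

```python
from collections import Counter


def calibration_report(human_labels, ai_labels):
    """Compare AI labels with human 'gold' labels on the same responses.

    Returns the share of exact matches and a list of
    ((human_label, ai_label), count) pairs for the disagreements,
    most frequent first.
    """
    if len(human_labels) != len(ai_labels) or not human_labels:
        raise ValueError("both label lists must be non-empty and equal in length")

    pairs = list(zip(human_labels, ai_labels))
    agreement = sum(h == a for h, a in pairs) / len(pairs)
    confusions = Counter((h, a) for h, a in pairs if h != a)
    return agreement, confusions.most_common()


# Hypothetical calibration set of five responses.
human = ["positive", "negative", "negative", "neutral", "negative"]
ai = ["positive", "positive", "positive", "neutral", "negative"]

agreement, confusions = calibration_report(human, ai)
print(f"agreement: {agreement:.0%}")
for (human_label, ai_label), count in confusions:
    print(f"human={human_label} -> ai={ai_label}: {count}")
```

A disagreement that repeats in the same direction, such as negative responses coded as positive, points to a systematic error worth fixing in the prompt or coding framework before the full run.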

What AI gets wrong: Nuance, risk, and context challenges

Here is the reality check. AI is not a magic solution, and treating it like one is how research projects go wrong.

The same Nature study that praised AI's reliability also confirmed its significant limitations in qualitative research. Sarcasm detection showed low reliability for both AI and human coders, but humans at least bring social context and conversational awareness. AI lacks the rapport and spontaneity of a live interview, which means it can miss the emotional undercurrent that gives qualitative data its real value.

"AI can identify that a respondent used negative language. It cannot always tell you why they felt that way, or whether they were being ironic."

Beyond nuance, the risks in AI-assisted qualitative research include several categories that every research team should take seriously:

- Hallucinations: AI can fabricate quotes or invent patterns that do not exist in the data

- Bias amplification: If training data reflects skewed demographics, the model will reproduce and scale those biases

- Data privacy risks: Feeding sensitive customer data into third-party AI systems raises compliance concerns

- Black-box opacity: Many AI tools cannot explain why they assigned a particular code or theme

- Researcher agency loss: Over-reliance on AI output can erode critical thinking and methodological rigor

- Minority view suppression: AI optimizes for patterns, which means rare but important perspectives can get lost

For mid-sized companies handling customer data, the privacy dimension is especially critical. Reviewing AI-driven research compliance practices before deploying any AI tool is not optional. It is a baseline requirement.

Human review is not a nice-to-have layer on top of AI analysis. It is the mechanism that catches errors, surfaces minority insights, and ensures your findings hold up under scrutiny. The goal is not to eliminate human judgment but to redirect it toward the tasks where it matters most.

Best practices: Integrating AI into your qualitative research process

Knowing the risks is only useful if you have a clear plan to manage them. Here is how to build a hybrid research process that captures AI's speed without sacrificing quality.

Research from Child Trends showed that AI-assisted transcript analysis can rapidly identify themes and accelerate timelines while augmenting, not replacing, human methods. That word "augmenting" is the key. The goal is a workflow where AI handles volume and humans handle depth.

Step-by-step integration guide:

1. Scope the project carefully: Define your research questions and success criteria before touching any AI tool

2. Choose the right methodology: Not all qualitative tasks benefit equally from AI; match the tool to the task

3. Prepare your data: Clean, anonymize, and structure your input data before processing

4. Run AI analysis: Use AI for initial coding, theme extraction, and sentiment scoring

5. Validate outputs: AI is more consistent than human coders on structured tasks, but its outputs still require rigorous human review

6. Apply human refinement: Have researchers review flagged responses, edge cases, and minority perspectives

7. Document your process: Record which steps used AI and which used human judgment for transparency and reproducibility
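For the data preparation step, a first pass at anonymization can be scripted before any transcript reaches an AI tool. The Python sketch below masks email addresses and phone-like numbers with simple patterns. The patterns and placeholder tokens are assumptions for illustration, and they will not catch names, addresses, or other identifiers, so treat this as a starting point and not a compliance guarantee.

```python
import re

# Simplified patterns (assumptions): real-world data needs broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)


# Hypothetical transcript excerpt.
raw = "Reach me at jane.doe@example.com or +1 (555) 010-9999."
print(redact(raw))
```

Running redaction before analysis also makes the documentation step easier, because the record of what was removed is created at the same time.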

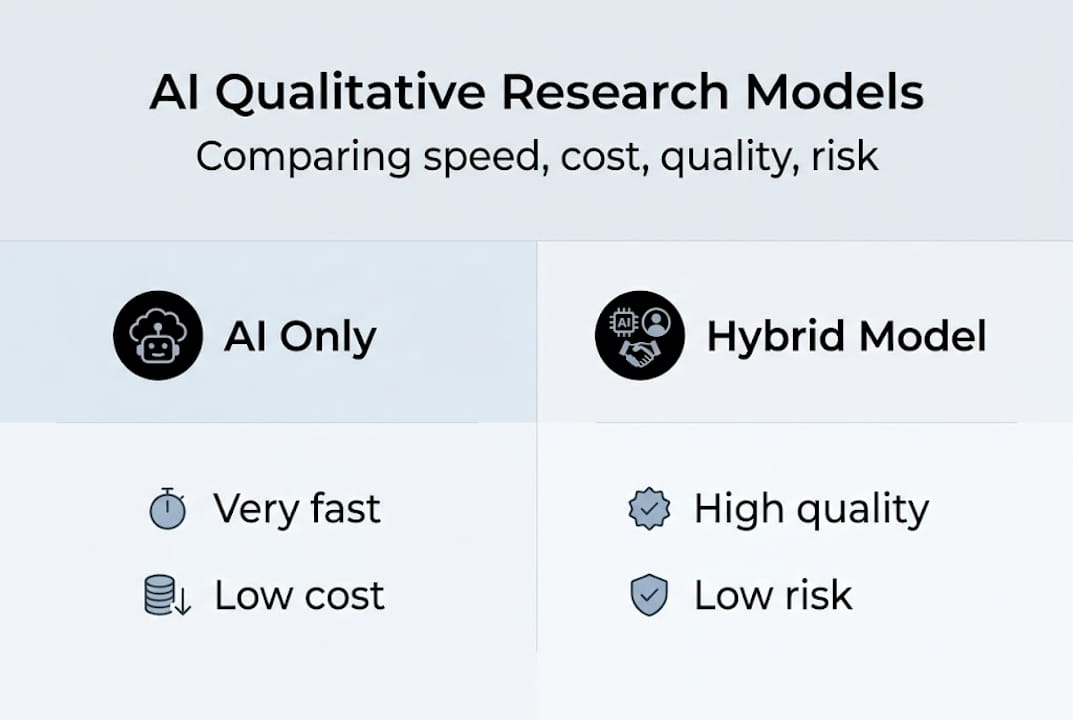

Here is a quick comparison of research approaches to help you choose the right model for each project:

| Approach | Speed | Cost | Quality | Risk |
|---|---|---|---|---|
| AI only | Very fast | Low | Moderate | High (no oversight) |
| Human only | Slow | High | High | Low |
| Hybrid | Fast | Moderate | High | Low (with validation) |

The hybrid model wins on almost every dimension when implemented correctly. Following clear steps for rapid research and selecting the right research methodology for each project type will keep your work both fast and credible.

Pro Tip: Build a bias audit into every AI-assisted project. Run a quick check on whether the themes AI surfaces are evenly distributed across demographic subgroups in your data. Skewed theme prevalence is often the first sign of a bias problem.
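One lightweight way to run this kind of audit is to compute each theme's share within every subgroup and flag themes whose share differs sharply between groups. The Python sketch below does that. The field names (`segment`, `theme`) and the 25-point gap threshold are arbitrary assumptions, and a flagged theme is a prompt for human review, not proof of bias, since groups can genuinely differ.

```python
from collections import Counter, defaultdict


def theme_prevalence_by_group(records, group_key="segment", theme_key="theme"):
    """Return {group: {theme: share of that group's responses}}."""
    counts = defaultdict(Counter)
    for record in records:
        counts[record[group_key]][record[theme_key]] += 1
    return {
        group: {t: c / sum(themes.values()) for t, c in themes.items()}
        for group, themes in counts.items()
    }


def flag_skewed_themes(prevalence, max_gap=0.25):
    """Flag themes whose share differs by more than `max_gap` across groups."""
    all_themes = {t for shares in prevalence.values() for t in shares}
    flagged = {}
    for theme in all_themes:
        # A theme missing from a group counts as a 0% share there.
        shares = [group.get(theme, 0.0) for group in prevalence.values()]
        gap = max(shares) - min(shares)
        if gap > max_gap:
            flagged[theme] = gap
    return flagged


# Hypothetical AI-coded responses from two audience segments.
coded = (
    [{"segment": "A", "theme": "price"}] * 3
    + [{"segment": "A", "theme": "quality"}]
    + [{"segment": "B", "theme": "price"}]
    + [{"segment": "B", "theme": "quality"}] * 3
)
prevalence = theme_prevalence_by_group(coded)
for theme, gap in sorted(flag_skewed_themes(prevalence).items()):
    print(f"{theme}: share differs by {gap:.0%} between segments")
```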

An expert perspective: Where AI truly changes the qualitative game

Most organizations fail at AI adoption in one of two directions. They either expect AI to do everything and are disappointed when it misses nuance, or they distrust it entirely and miss the genuine productivity gains it offers. Neither extreme serves your research goals.

The most useful mental model is to think of AI as a force multiplier. It does not change what good research looks like. It changes how much of it you can do, and how fast. A researcher who once spent three weeks manually coding 200 transcripts can now review AI-generated codes across 2,000 transcripts in the same time. That is not a replacement. That is leverage.

What this means practically is that AI's consistency advantages are most valuable when paired with researchers who have strong data auditing skills. The profession is shifting. The researchers who will outcompete their peers are not the ones who resist AI or the ones who trust it blindly. They are the ones who know exactly when to let the model run and when to override it.

Building strategic research advantages in 2026 means developing new skills: output validation, prompt design, bias detection, and hybrid workflow management. These are not technical skills reserved for data scientists. They are research skills for a new era.

Unlock faster, smarter research with Gather

The hybrid AI and human research approach described throughout this article is exactly what Gather is built to support. Gather's AI-native platform automates study design, interview execution, and insight delivery, giving your team board-ready findings in days, not months.

Whether you are running a customer research study or exploring AI research use cases for your specific market, Gather blends automation with the oversight your team needs to trust the results. The Gather research platform integrates with your existing CRM and customer data sources, so you can target the exact audience segments that matter to your strategy, fast.

Frequently asked questions

How does AI analyze qualitative data differently than humans?

AI automates coding and pattern detection across vast text datasets with consistent reliability, while humans deliver nuanced interpretation, emotional context, and judgment. Studies show GPT-4 outperforms humans on inter-rater reliability for structured tasks like sentiment scoring, but human oversight remains essential for depth.

What risks should marketers consider when using AI for qualitative research?

Key risks include misinterpreted nuance, hallucinated quotes, data privacy exposure, and amplified bias from skewed training data. Black-box opacity and researcher agency loss are also serious concerns, and both call for regular validation and human review.

Can AI replace qualitative researchers?

No. AI accelerates and scales the analysis layer, but humans are irreplaceable for interpreting emotional depth, capturing minority perspectives, and validating results. AI limitations in nuance, including low sarcasm detection reliability, confirm that human judgment is a structural requirement, not an optional add-on.

Where does AI offer the most value in qualitative research?

AI delivers the greatest value in rapid theme detection, sentiment scoring, and synthesizing large-scale datasets efficiently. Case study evidence shows AI-assisted transcript analysis accelerates timelines significantly while augmenting human methods rather than replacing them.